Weeknotes-21-10-15

Much of this week at work felt like settling back into the usual rhythm.

Monday: meetings and catching up with things and people, putting out minor fires and preparing for the week ahead.

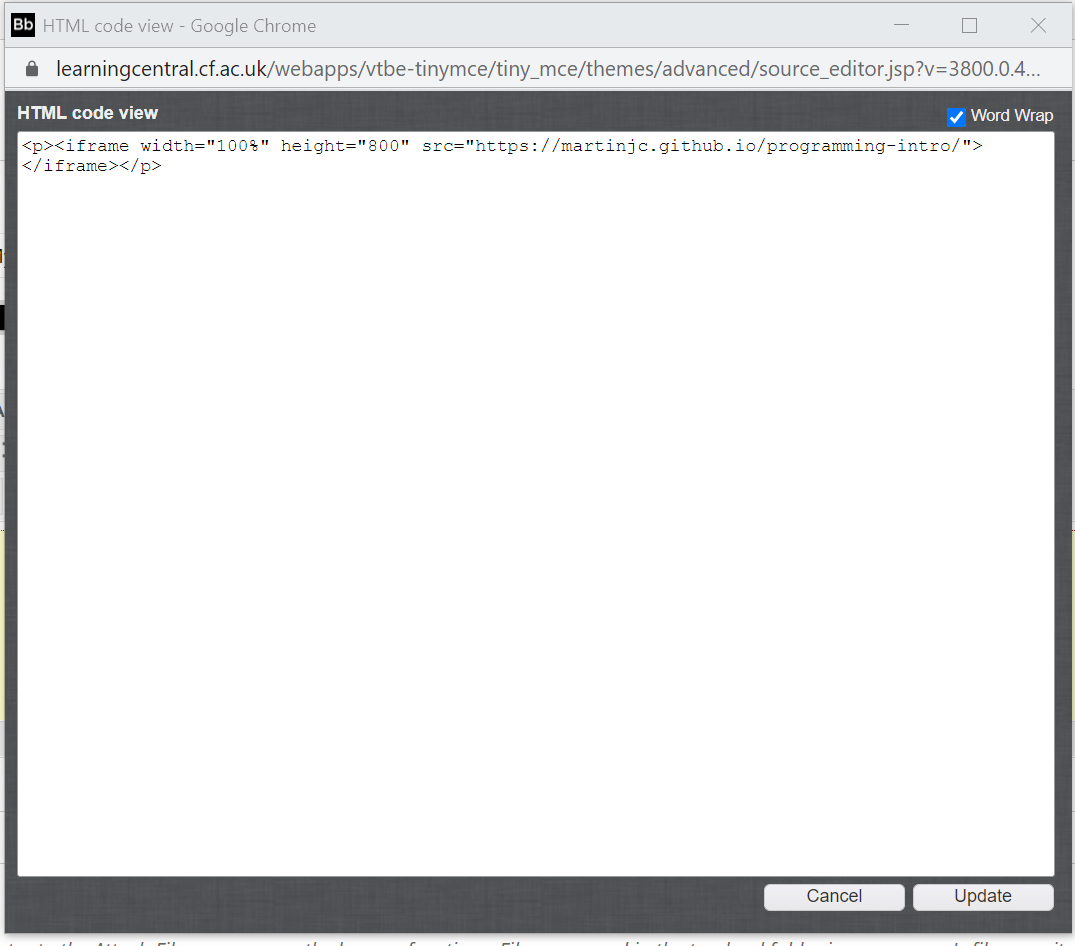

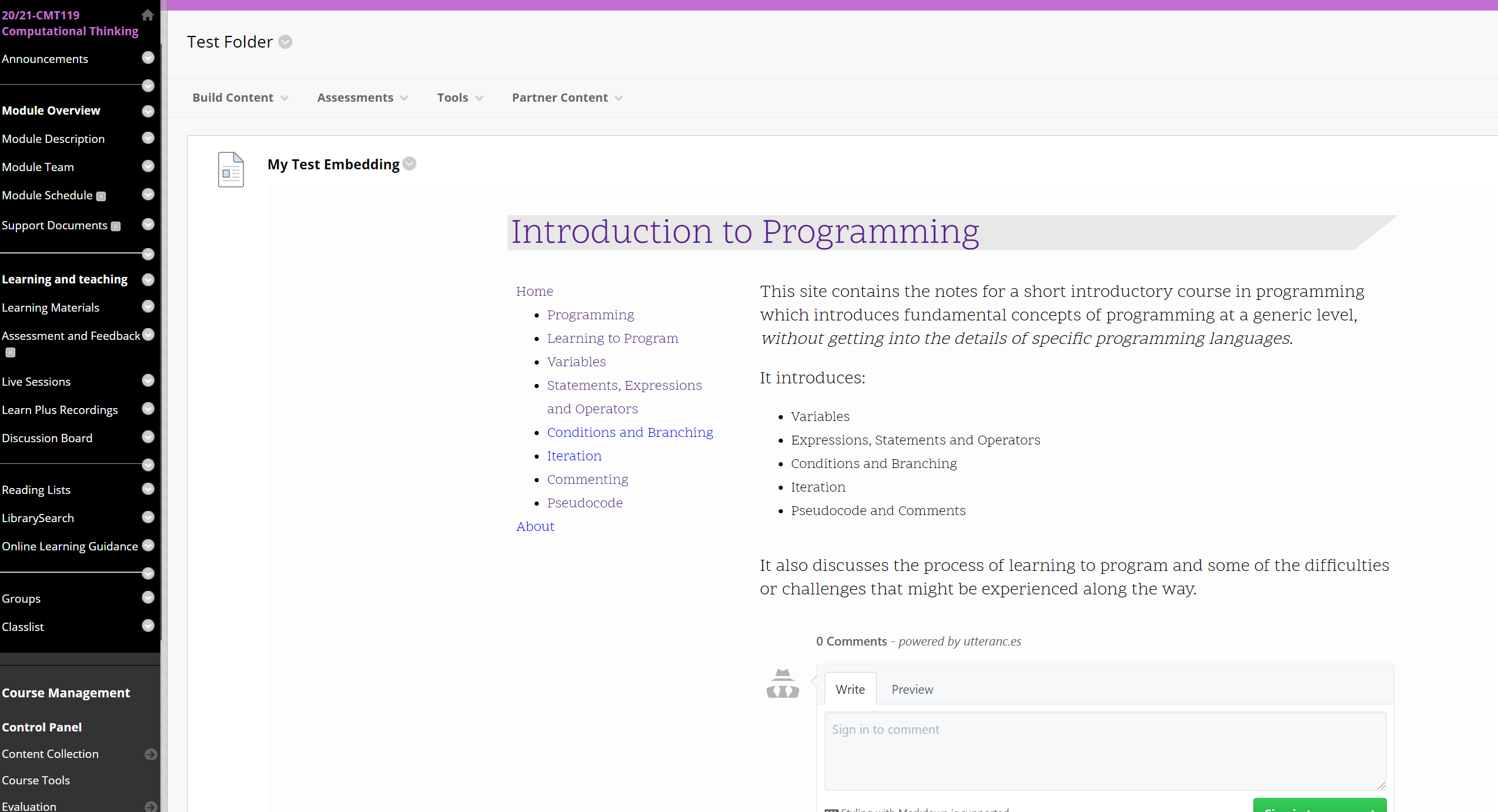

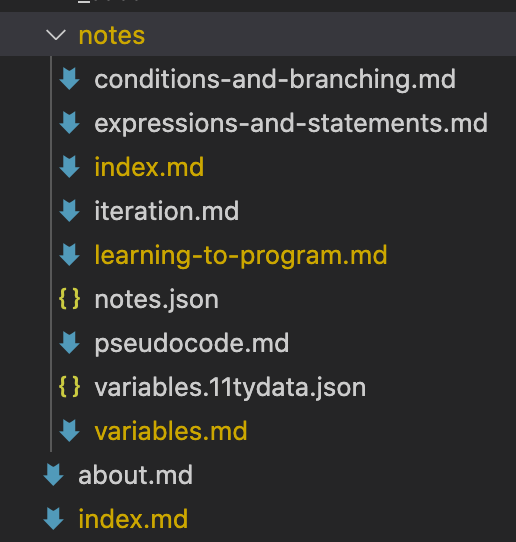

Tuesday: I spent the day online with students, doing the first ‘programming’ session practicing problem solving with a fake programming language. Last year’s students had christened this fake language ‘MartinScript’; this year’s went with ‘BennyScript’. I have not taken this personally. The session itself seemed to go well, there was good engagement, and some of the students even carried on afterwards to attempt the problem solving with a real programming language, which was awesome to see.

Wednesday: CompJ Lab + the usual Wednesday meetings.

Thursday: another day on site, this time introducing the assessment for the Computational Thinking module.

Friday: possibly the most interesting day of the week. 4 open forums with different groups of students, where we were able to talk about some of the things we’re interested in as a School, but also hear some of the issues students are facing, and start to solve them. The turnout varied a bit between the years, but everyone who turned up had some interesting and useful things to say, and by the end of Friday afternoon I’d already managed to put out a few minor fires around the place. A tiring day though, spent almost solidly in Teams meetings (especially when you throw in a DLT forum with the PVC halfway through the day) - by the end of the day I was horizontal on the office sofa with the sleeping pup, attempting to send emails without falling asleep myself.

Overall then, a strangely ‘standard’ week. A couple of work highlights (apart from the teaching) were around some input I managed to have into a couple of new things on the horizon: I managed to make a positive impact on some potential new job roles, and on some curriculum development guidance. Small wins, but I’ll take them where I can.

Outside of work though, a really positive week. Having been accustomed to being a solo runner for a very long time, I realised when parkrun returned that actually I do enjoy the social side of running, and having people around while running is good for me. So, I took the plunge and joined a local running club. I originally signed up for two trial runs: a training session on Monday and then a conversational run on Wednesday, thinking I’d make a decision about joining once I’d done them both. Monday night we did hill sprints on a hill in Penarth and I had such a good time I paid my subs the minute I got home and signed up. Wednesday was a really pleasant gentle run around strangetown where I managed to talk to quite a few other people and had a very nice time. I’m looking forward to having a little bit of a more regular structure to my running (which I’ve missed since stopping the running commuting) and also to having more social things going on.

riding or running or that

Did Parkrun at Grangemoor on Saturday, another 30 seconds off the time, so we’re getting closer…plus there’s that whole ‘running club’ thing.

Did the really really ‘old’ commute a couple of times to get to the Queen’s buildings for in-person teaching. Turns out I can cycle between the two sites in about three minutes, which is very handy when you get caught talking in your office in Abacws five minutes before you’re due in class in Queens…